

Game design

The first test game, Find the Bug!, became very popular in the test community. Because it focuses on V-model testing, it naturally gave rise to questions about a game geared more towards agile testing. However, since I had little experience of agile testing and no good ideas about how to turn agile methodology into game mechanisms, I didn't pay much attention to those questions. But then several sources of inspiration came to me at the same time.

One was a negative experience of an agile project, where the test-driven methodology was abandoned in favor of a forced and undocumented delivery of untested code, since defects could be put on a backlog anyway. Another was the brilliant but sadly underrated game Alchemist, which used simple mechanisms to capture the idea of mixing potions towards a predefined goal. This is very similar to how test-driven development aims at testing code until a predefined test case passes. As if this wasn't enough, I was asked to prepare a talk about how games can be used to teach testing, to be submitted to the test conference EuroSTAR, using Find the Bug! as a physical illustration. Wouldn't it be great to show games for both V-model testing and agile testing? Last but certainly not least, the next contest at The Game Crafter was the Learning Challenge: design a game with a learning objective. Find the Bug! Agile was simply screaming to be designed!

The initial idea was very simple. Collect codes (cubes) and create components (tiles), similar to how potions are created in Alchemist. Then spend them on other players' user stories (cards) to test for the right combination of codes, similar to how you guess combinations in Mastermind. Failed tests would give codes to the product owner, while passed test cases would give victory points: the more integrated user stories, the more victory points. Just like Find the Bug!, this agile cousin would combine fun game mechanisms with good learning points.

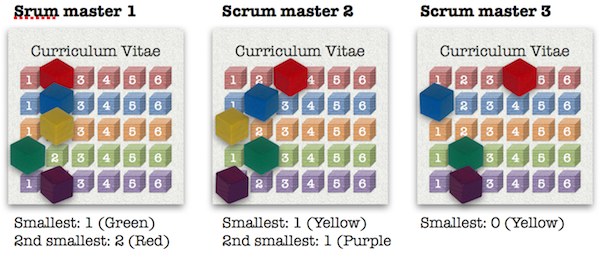

But in spite of the simple idea, there were many questions that needed to be answered. How many different codes should there be? How many codes should be used as input and how many as output? How should the game encourage a natural flow, where players set up use cases for each other? How should the game be balanced, so that the tester and the product owner both get a fair share of the reward?

As so often before, I resorted to a spreadsheet to answer the questions. After some iterations, I decided to proceed with five colors in total, of which two may be used as input and two as output. The rationale behind the number of colors was that five colors can be paired in twenty combinations (if Color 1-Color 2 and Color 2-Color 1 are counted as two separate combinations), which is a reasonable number of user stories for a short game. The rationale behind the two-input, two-output mechanism was to make the individual combinations equally attractive (who would want to use three codes as input and get only one code as output?) and to make it easier to split the reward between the tester and the product owner by letting them have one each.
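The count of twenty can be checked with a few lines of Python. This is only a sketch of the arithmetic, not the spreadsheet used for the design; the color names are placeholders, since the actual colors are not stated here.

```python
from itertools import combinations, permutations

# Placeholder names for the five code colors (an assumption for illustration).
COLORS = ["color1", "color2", "color3", "color4", "color5"]

# Ordered pairs of two different colors:
# (color1, color2) and (color2, color1) count as two separate combinations.
ordered_pairs = list(permutations(COLORS, 2))

# Unordered pairs, for comparison: each pair of colors counts only once.
unordered_pairs = list(combinations(COLORS, 2))

print(len(ordered_pairs))    # 5 * 4 = 20
print(len(unordered_pairs))  # 5 * 4 / 2 = 10
```

Counting order as significant doubles the number of distinct pairs from ten to twenty, which is the figure used above for the number of user stories.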

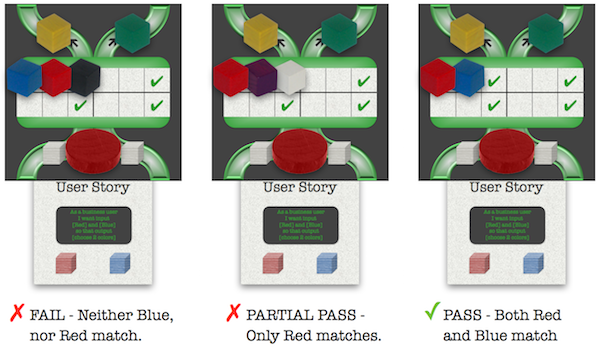

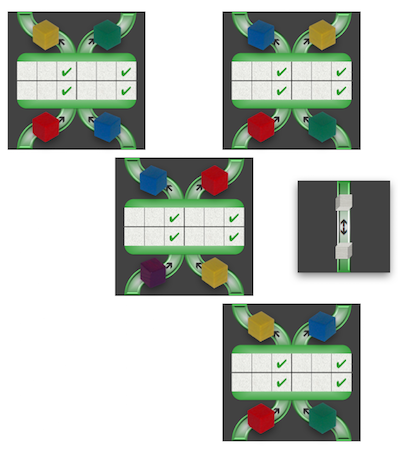

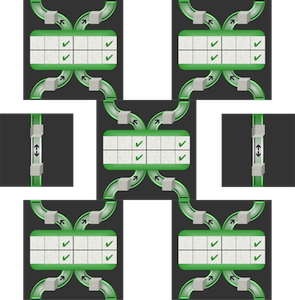

For the actual testing, that is, the guessing game, I used the Mastermind mechanism of letting the product owner give clues about the results (fail if both codes are wrong and partial pass if only one of the two is wrong). Rewarding the product owner with codes and the tester with victory points was a design decision supported by simulations. It allowed two different strategic paths, and the simulations ensured that the two paths were balanced. (See Rules for the details.)

For the integration of components into modules, I wanted to create the image of a growing system where more and more data flows in and out. By requiring the components to be arranged in distorted columns, each component would have to feed and be fed by two other components. However, such a module would become wider and wider, requiring more input but also giving more output, and creating an incentive to simply hoard codes until the other players have prepared the components for you to integrate.
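The clue given by the product owner (fail, partial pass, or pass) can be sketched as a small function. This is a minimal illustration under assumptions: the function name `clue`, the color names, and the position-by-position comparison are hypothetical, and the Rules page remains the authority on how a test is actually resolved.

```python
def clue(guess, secret):
    """Return the product owner's clue for a two-code test.

    Assumption: the guess is compared with the secret position by
    position. The actual rules may compare the codes differently.
    """
    if len(guess) != 2 or len(secret) != 2:
        raise ValueError("a test uses exactly two codes")
    # Count how many of the two guessed codes are wrong.
    wrong = sum(1 for g, s in zip(guess, secret) if g != s)
    return {0: "pass", 1: "partial pass", 2: "fail"}[wrong]


# Hypothetical user story hiding the combination black-green.
secret = ("black", "green")
print(clue(("red", "blue"), secret))     # both wrong
print(clue(("black", "blue"), secret))   # one of the two wrong
print(clue(("black", "green"), secret))  # both right
```

With only two codes per test there are just three possible clues, which keeps the guessing quick compared with a full game of Mastermind.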

Removing that incentive turned out to be more difficult than expected. First I considered a hand size limit of six, a number often seen in other games (like Tigris & Euphrates). This would allow the players to have at least one code of each color but only allow three components to be executed, since each component requires two input codes. This seemed to solve the problem but was not very elegant. A golden rule of game design says that gameplay should be restricted not by rules but by strategy. For this game, I would have to find mechanisms that allow code hoarding but make it a bad strategy. I found three.

First, I introduced the ability to copy other players' codes. The more codes you hoard, the more you help the other players replenish their codes. Second, I increased the reward given to product owners and testers once their components get integrated. This would give them an incentive to deliver components in time and with the right quality (i.e. components that can be integrated). Not only an elegant solution to the game problem but also a thematic one! Third, I made it easier to integrate modules without too many codes. The more "missing links" in the module, the more open input slots to put codes in. Again, the theme helped me find the solution. Real test environments use stubs where components are missing, so why couldn't I do the same? By letting stubs skip one level and link components with one level between them, codes at the first level could flow through many more levels.

With that, I could focus on tests and rule reviews. The components were fairly few and simple; the codes could be represented by wooden cubes for a more concrete test feeling and the components only had to show input and output. I decided to stick to the black and green color scheme of Find the Bug! and reuse the labyrinth-like pipes from Mice in a Maze. I even got to reuse the first but rejected bug image for Find the Bug! by adding longer legs to it to make it look more "agile".

Game components


Annotated games

Complete test games are presented under Annotated Games.

If you like those game mechanisms, I can also recommend:
