
Game design

The first idea

Find the Bug! - Project is a good example of a game design that evolved from both mechanics and theme. The roots of the game stretch back to Politburo, a game of which I wrote the following: "The inspiration for Politburo actually came from my professional work, where political differences often delay or even prevent work from getting done. The step to the totalitarian theme of my two previous micro games Comrade and Gulag was surprisingly small. The initial idea was to have a project with three different priorities, time, cost and quality, and where each player would want to prioritize one or several areas to score the most but where all areas must score something or the entire project would fail (causing all players to lose), resulting in a blame game."

That simple idea fit the black humor of the Comrade series very well, and the project game was shelved. However, many years (and games played) later, the idea of designing a more serious game about projects returned to me. Kanban showed me that you can design a fun game about project deliveries, and Food Chain Magnate showed me that it can be fun to manage organizational hierarchies in a game.

Even games as different as Concordia and Carpe Diem helped with ideas. The former gave the idea of playing different cards and spending a turn to "reset" and get the cards back. This would create a balance between many weak cards with few resets and few strong cards with many resets. The latter gave the idea of using those reset turns to place "seats" between goal cards (steering group cards) to influence which steering groups would evaluate future projects. This would give players a hint of which priorities to focus on.

What would be the objective of a project game then? To succeed with projects. How would you measure success? Against the time, cost and quality budgets. Who decides the budgets? The steering group.
Thus, in a project game the players would assume two roles to optimize the "victory points" (the budget margins): as project managers, they would supply the optimal mix of "resources" (time, cost and quality), and as steering group members, they would adjust the "demand" for resources (the project budget). To make things more complicated, they would participate in each other's projects and sometimes cooperate but most often compete for scarce resources and with conflicting objectives.

A typical game decision would look something like this. I have a team of developers and testers. There are some upcoming projects with a tight time budget. There are also steering group members willing to prioritize time higher than cost. Perhaps I should send a developer on training (and increase her cost but in exchange get a senior tester delivering in less time). Then I should also make sure that the steering group will reward time more than cost and perhaps cooperate with some other players. This sounds like a game decision that is both interesting and thematic.

Turning the idea into a game

So far so good, but how to turn this into a playable game? Imagine the scoring procedure if you first had to compare the time spent with the budget to get the total victory points for time, then assess each player's time efficiency, then allocate the individual victory points based on this time efficiency, then multiply the score by a priority factor to indicate time's relative priority, and finally repeat for cost and quality. Such a game would need an accompanying spreadsheet!

This challenge nearly stopped the game until the breakthrough came. Why not skip some of the steps by listing both time and time efficiency on the action? Say that you play a senior developer to a project. Such a role would increase the cost of the project but also the time efficiency of the project.
When the project is completed, there is no need to calculate an exact time efficiency, only to check whether the time budget was met and, if so, exchange each player's time efficiency tokens for victory point tokens.

The next challenge was how to incorporate quality. Should it simply be treated as the same kind of resource as time and cost and thus rewarded with "quality efficiency"? This could work but felt a bit dull and almost solvable. After all, the Find the Bug series is about finding bugs, so the game should make this part a bit more interesting. How about letting testers add not quality but tests with the ability to detect random bugs? After some iterations, I came up with the following basic project model:

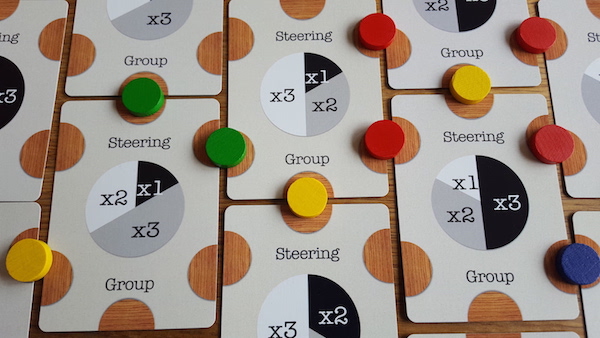

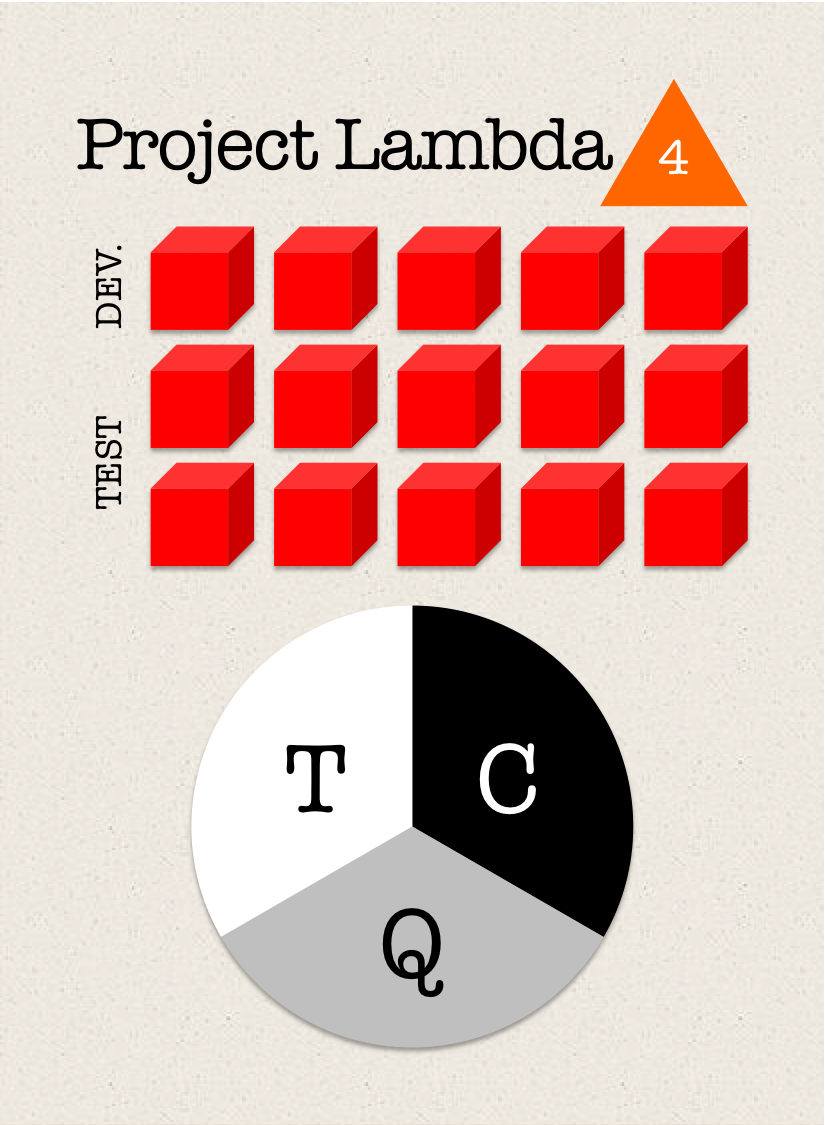

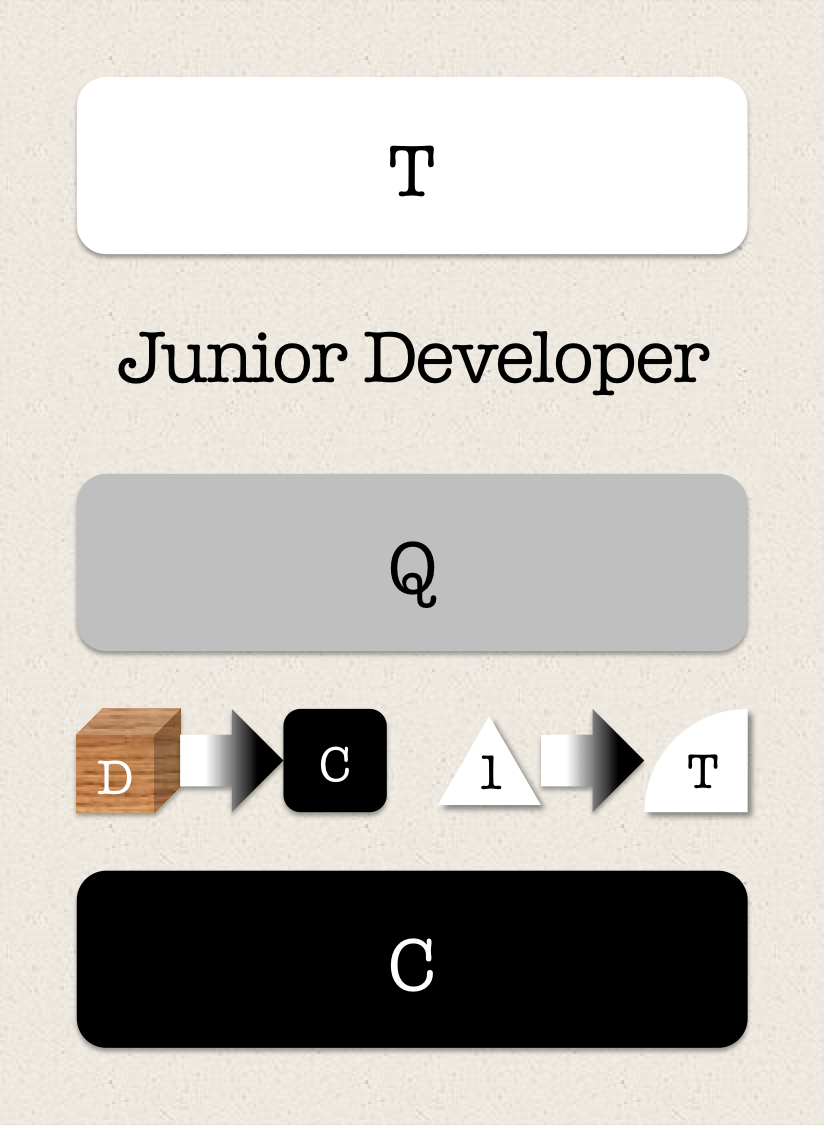

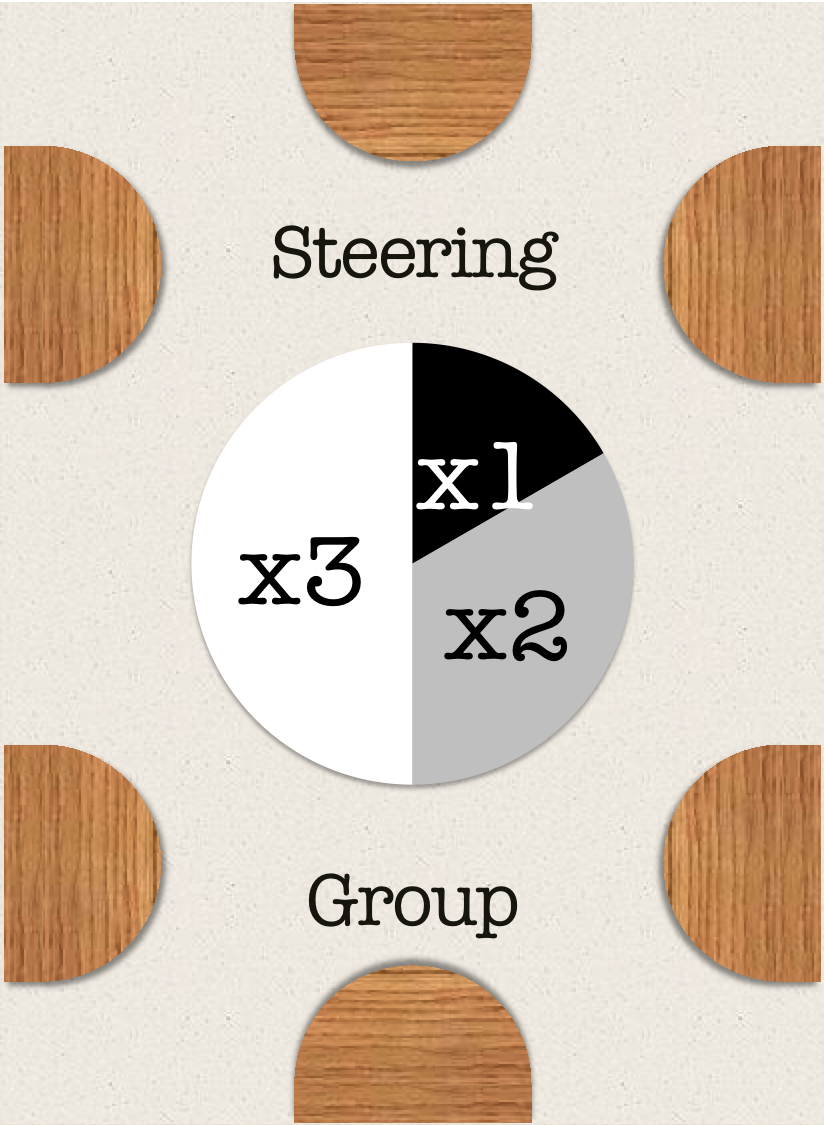

Thus, in the basic project, time and cost will be spent exactly according to budget to deliver a quality exactly according to budget. Say that the project spends 1 extra time unit on test. This will give all the players +0.5 VP (since 0.5 additional defects can be expected to be detected). However, the players investing in time efficiency will lose 1 VP (since the time spent exceeds the budget by 1) and thus get a net loss of 0.5. This will create exactly the kind of tension between different project interests that the game aims to simulate!

The cards below illustrate the basic game flow. The first (project) card shows a project with 5 development cubes and 10 test cubes. The initial risk is 2 out of 5, which equals 4.5 bugs on average, and the budget is 5/5/8 (time/cost/quality). To increase the variability, the budgets are half-sized cards that are randomly allocated to project cards, and the risks are triangular chits that may change during the game. The second (project member) card shows a developer that may be assigned to the project. This will let the player take 1 development cube (D) and place it in the cost reward box and add 1 to the time budget. When the project is completed, the cube is converted into victory points, depending on how well the cost budget was met and how much the steering group prioritized cost. The third (steering group) card shows a steering group that prioritizes time first (triple the victory points), then quality (double the victory points) and last cost (no multiplier).

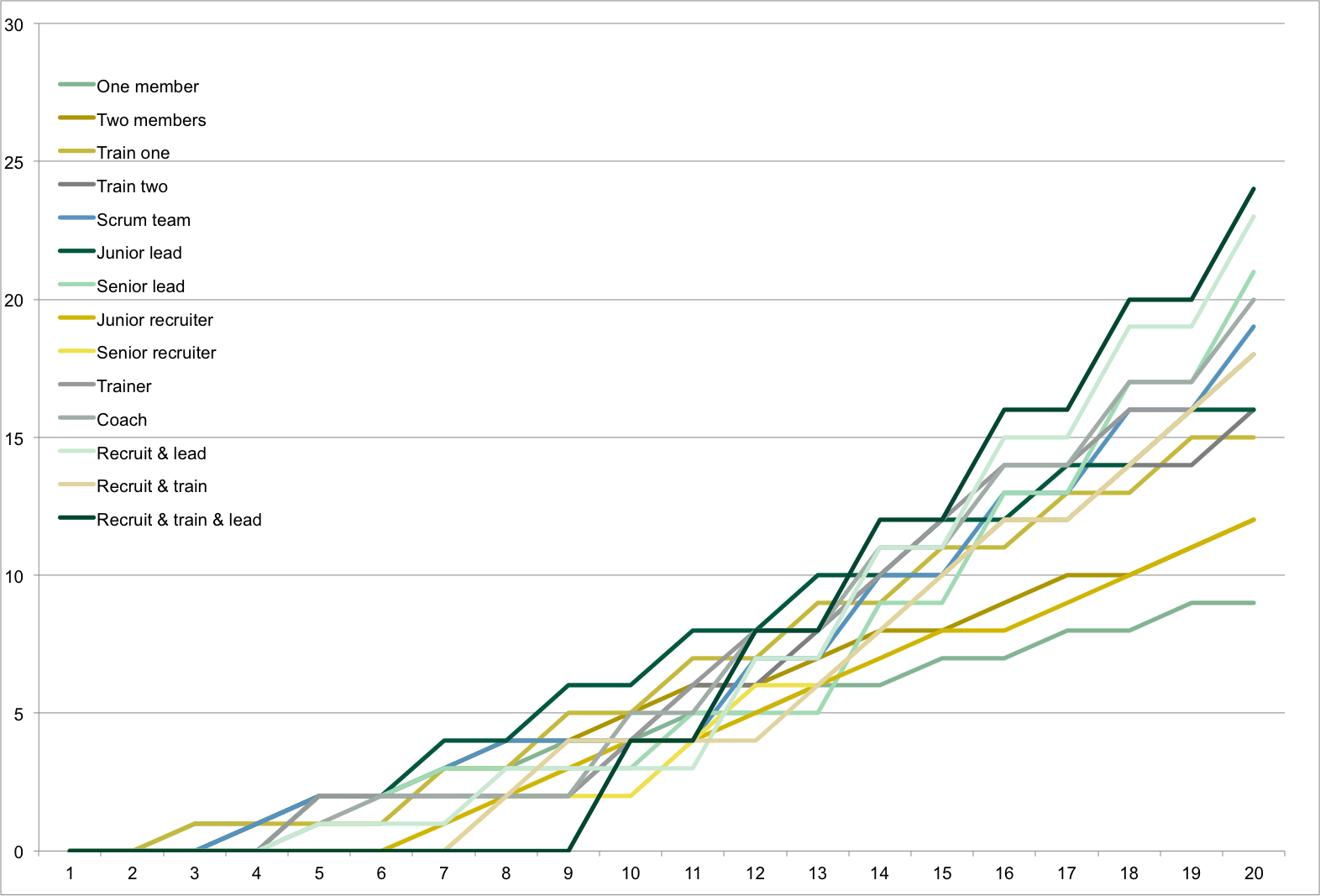
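The conversion of reward cubes into victory points can be sketched in a few lines of Python. This is only an illustration, not the published rules: the function name `project_vp`, the data layout, and in particular the simplification that cubes in an area score only if that area's budget was met are assumptions. The multipliers (3 for time, 2 for quality, 1 for cost) are taken from the steering group card described above.

```python
# Priority multipliers from the example steering group card:
# time first (x3), then quality (x2), cost last (no multiplier).
PRIORITY = {"time": 3, "quality": 2, "cost": 1}


def project_vp(reward_cubes, budgets_met, priority=PRIORITY):
    """Convert one player's reward cubes into victory points.

    reward_cubes: cubes the player placed in each reward box,
                  e.g. {"time": 2, "cost": 1}
    budgets_met:  set of areas whose budget the project met

    Assumption (hypothetical simplification): cubes in an area
    score only if the project met that area's budget.
    """
    return sum(
        cubes * priority[area]
        for area, cubes in reward_cubes.items()
        if area in budgets_met
    )


# A player with 2 time cubes and 1 cost cube in a project that
# met its time and quality budgets but overran its cost budget.
vp_overrun = project_vp({"time": 2, "cost": 1}, {"time", "quality"})

# The same player if the cost budget had been met as well.
vp_all_met = project_vp({"time": 2, "cost": 1}, {"time", "quality", "cost"})
```

Under these assumptions the first call scores only the two time cubes at triple value, while the second also scores the cost cube at face value, which shows why a player wants both a budget that is met and a steering group that prioritizes the matching area.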

Balancing the game

The next challenge was to balance the game by ensuring that the different strategic paths would be worth an equal number of cubes, leaving it to the player interaction to tell the players apart (who manages to set up a team that best avoids competition, who manages to secure steering group priorities that best match projects and so on). Some designers argue that testing should balance the game, but as a professional in the testing business, I know that testing cannot create quality. Instead, I had to come up with conditions for a simple simulation table and feed different strategies into it. Assuming that each project requires 10 cubes to be taken (5 development and 5 test) and that the number of projects equals the number of players, each strategy would have to return 10 cubes in about the same time. After some further iterations, I came up with the following results after 15 turns (where "X" equals reset):

The iterations answered many questions in relatively little time. "Vanilla strategies", where you only play with 1 or 2 project members, return 7-8 VP and are inferior to more elaborate strategies, which return 10-12 VP. The strategies at the lower end of the VP range return VP early and are likely to benefit from the best projects before the strategies at the higher end get their VP engines running. Some other important lessons from the iterations were that the leads need 1 cube to be trained and the Scrum/DevOps teams need 2 cubes to be formed (or else they would be too strong), while the trainers and recruiters need to become senior automatically (or else they would be too weak). The iterations also helped assess the average number of steering group seats given to the players and thus the suitable number of steering group cards. They could easily be extended to analyze shorter and longer games to capture obvious imbalances.
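A simulation table of this kind can be sketched in Python. The sketch below is not the table used for the game: the function `simulate`, the two strategy names and the cube values per card are hypothetical, and it counts cubes rather than VP. It only shows the mechanism of playing one card per turn and spending a turn on a reset ("X") when the hand is empty.

```python
def simulate(hand, turns=15):
    """Play one card per turn; spend a turn on a reset ("X")
    when no cards are left in hand.

    hand: cubes returned by each card when played
          (hypothetical values, not taken from the game).
    Returns the turn-by-turn trace and the total cubes collected.
    """
    available = list(hand)
    trace = []
    total = 0
    for _ in range(turns):
        if available:
            cubes = available.pop(0)  # play the next card
            total += cubes
            trace.append(str(cubes))
        else:
            available = list(hand)    # reset: take all cards back
            trace.append("X")
    return " ".join(trace), total


# Two made-up strategies for illustration only.
strategies = {
    "many weak cards": [1, 1, 1, 1],
    "few strong cards": [2, 2],
}
for name, hand in strategies.items():
    trace, total = simulate(hand)
    print(f"{name:18} {trace}  -> {total} cubes")
```

Extending the `turns` argument gives a quick check of shorter and longer games, and costs such as the cubes needed to train a lead could be modeled as extra turns or negative entries in a strategy.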

Testing the game

Naturally, not even the best iterations can replace testing, but the important conclusion was that the core of the game was balanced and that any imbalances would have to be created and exploited by the players by competing in budget areas, manipulating the game time etc. Thanks to the iterations, I already had different strategies to pursue in the testing. The players first built up different teams with different strengths and then went on to select projects and steering groups that would give them the most return for the effort. As designed, time was critical in the early projects, when the teams were mostly junior, and cost in the later projects, when the teams were mostly senior. The game worked but felt a bit too much like an engine building game without drama, i.e. players would just run their engines until the end of the game and then count VP. This observation inspired several tweaks that not only gave the progressive gameplay more peaks and troughs but also felt thematic.

Those tweaks turned the game from a balanced optimization exercise into a game of intertwined strategic and tactical paths where the players must keep an eye on each other to find the best ones. Find the Bug! - Project may be less accessible than Find the Bug! and Find the Bug! - Agile, but it is also more challenging.

Game components

Annotated games

Complete test games are presented under Annotated Games. If you like those game mechanisms, I can also recommend:
